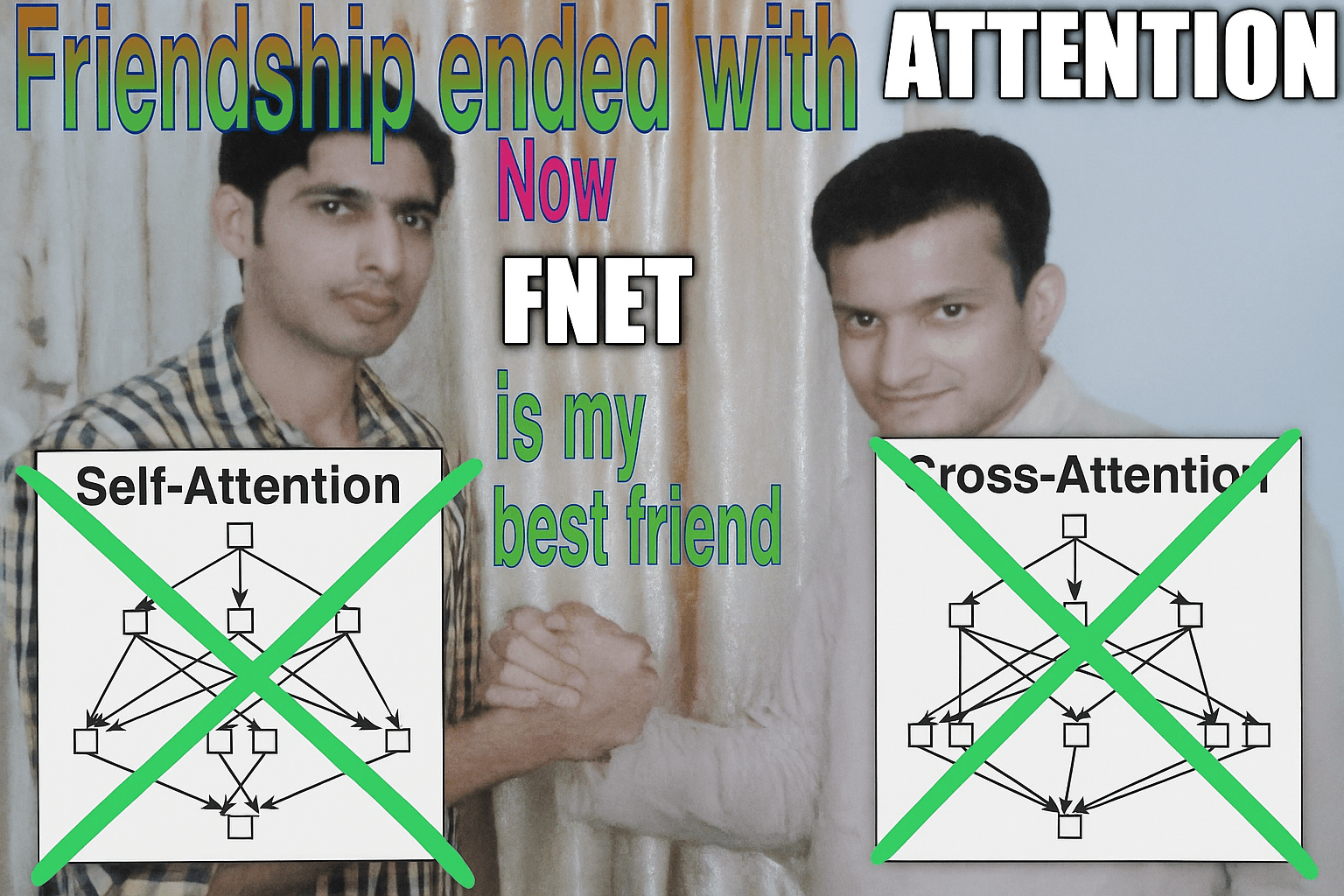

Friendship over with Attention. Now FNet is my best friend


The Transformer architecture, introduced in the seminal paper "Attention Is All You Need", has revolutionized AI, particularly in Natural Language Processing (NLP). Its success hinges on the self-attention mechanism, which allows the model to dynamically weigh the importance of different words or tokens in an input sequence relative to each other, capturing context and long-range dependencies effectively.

The Attention Bottleneck: Power vs. Price

Think of self-attention like this: for every word in a sentence, the model calculates an "attention score" indicating how relevant every other word is to understanding that specific word's meaning in context. This is done using Query (Q), Key (K), and Value (V) projections derived from the input embeddings. The scores determine how much information from other words (Values) should be blended into the current word's representation.
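To make the N × N cost concrete, here is a minimal pure-Python sketch of scaled dot-product attention, softmax(QKᵀ/√d)·V, as defined in "Attention Is All You Need". It assumes Q, K and V have already been produced (the learned projections, multiple heads and masking are left out), and the function names are illustrative rather than any library's API. The nested loop that fills `scores` is the pairwise comparison described above: one dot product for every (query, key) pair.

```python
import math
from typing import List

Matrix = List[List[float]]


def softmax(row: List[float]) -> List[float]:
    # Subtract the row maximum before exponentiating for numerical stability.
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(q: Matrix, k: Matrix, v: Matrix) -> Matrix:
    """Return softmax(Q K^T / sqrt(d)) V for N query, key and value vectors."""
    n, d = len(q), len(q[0])
    scale = 1.0 / math.sqrt(d)
    # N x N score matrix: one dot product per (query, key) pair, O(N^2 * d) work.
    scores = [[scale * sum(qi * ki for qi, ki in zip(q[i], k[j])) for j in range(n)]
              for i in range(n)]
    # Normalize each query's scores into weights that sum to 1.
    weights = [softmax(row) for row in scores]
    # Each output row blends all N value vectors according to those weights.
    d_v = len(v[0])
    return [[sum(weights[i][j] * v[j][c] for j in range(n)) for c in range(d_v)]
            for i in range(n)]


if __name__ == "__main__":
    x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three toy 2-d token vectors
    out = scaled_dot_product_attention(x, x, x)  # identity projections: Q = K = V = x
    for row in out:
        print([round(val, 3) for val in row])
```

With identity projections (Q = K = V = x), each output row is a softmax-weighted average of all three token vectors; a real layer would learn separate projection matrices for Q, K and V.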

While incredibly powerful, this pairwise comparison is computationally intensive. Its cost scales quadratically (O(N²)) with the sequence length N, in both computation and memory: doubling the input length (say, from 512 to 1,024 tokens) quadruples the number of attention scores each layer must compute and store. This quadratic scaling becomes a major bottleneck for:

Training: Making it expensive and time-consuming to train models on large datasets or long sequences.

Inference: Limiting the speed at which models can process long inputs in real-time applications.

Deployment: Making it challenging to run large Transformers on resource-constrained hardware like smartphones or embedded systems (AI at the Edge).
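A back-of-the-envelope sketch of that growth, counting only the entries of the attention score matrix (per head, per layer) and ignoring the hidden dimension and constant factors. For comparison it also prints N·log₂N, the cost class of the Fast Fourier Transform. These are operation-count proxies, not measured runtimes, and the helper names are hypothetical.

```python
import math


def attention_scores(n: int) -> int:
    # Self-attention scores every (query, key) pair: an N x N matrix per head, per layer.
    return n * n


def n_log_n(n: int) -> float:
    # Cost class of a radix-2 FFT over N positions (constants ignored).
    return n * math.log2(n)


for n in (512, 1024, 2048, 4096):
    print(f"N={n:>5}  attention scores={attention_scores(n):>11,}  "
          f"N*log2(N)={n_log_n(n):>8,.0f}  ratio={attention_scores(n) / n_log_n(n):6.1f}")

# Doubling N quadruples the number of attention scores.
assert attention_scores(1024) == 4 * attention_scores(512)
```

At N = 512 that is 262,144 scores versus 4,608 for N·log₂N. The ratio between the two is N/log₂N, roughly 57 at 512 tokens and roughly 341 at 4,096, so the gap widens as sequences get longer.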

Enter FNet: Computing with Fourier Transforms

Researchers at Google AI proposed a startlingly efficient alternative in their paper "FNet: Mixing Tokens with Fourier Transforms". Their model, FNet, completely replaces the computationally heavy self-attention layers within the Transformer encoder block with a standard, parameter-free Fourier Transform.

What's a Fourier Transform? Originating in signal processing, the Discrete Fourier Transform (DFT) decomposes a sequence (like the sequence of token embeddings) into its constituent frequencies. It essentially reveals the underlying periodic patterns or "resonances" within the data. The Fast Fourier Transform (FFT) is simply a highly efficient algorithm for computing the DFT, reducing its complexity from O(N²) to O(N log N).
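The O(N²)-to-O(N log N) reduction can be seen side by side in a short pure-Python sketch: a direct DFT that evaluates X[k] = Σₜ x[t]·e^(−2πikt/N) term by term, and a recursive radix-2 Cooley-Tukey FFT that splits the input into even- and odd-indexed samples and combines the two half-size results. This textbook variant assumes a power-of-two length; production code would call an optimized library routine (for example `numpy.fft.fft`) instead.

```python
import cmath
from typing import List, Sequence


def dft(x: Sequence[complex]) -> List[complex]:
    """Direct DFT: X[k] = sum_t x[t] * exp(-2*pi*i*k*t/N). O(N^2) operations."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]


def fft(x: Sequence[complex]) -> List[complex]:
    """Recursive radix-2 Cooley-Tukey FFT. O(N log N); len(x) must be a power of 2."""
    n = len(x)
    if n == 1:
        return list(x)
    if n % 2:
        raise ValueError("length must be a power of 2")
    even = fft(x[0::2])  # DFT of the even-indexed samples
    odd = fft(x[1::2])   # DFT of the odd-indexed samples
    out = [0j] * n
    for k in range(n // 2):
        # Each half-size result is reused for two output frequencies.
        twiddle = cmath.exp(-2j * cmath.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


if __name__ == "__main__":
    signal = [1.0, 2.0, 0.0, -1.0, 1.5, 0.5, -2.0, 0.0]  # length 8, a power of 2
    slow, fast = dft(signal), fft(signal)
    # Largest disagreement is at floating-point rounding level.
    print(max(abs(a - b) for a, b in zip(slow, fast)))
```

Both functions return the same spectrum up to rounding. Each recursion level of the FFT does O(N) work and there are log₂N levels, which is where O(N log N) comes from.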

In FNet, the FFT is applied to mix information across the token sequence. The intuition is that analyzing the frequency components provides a form of global context, capturing interactions between tokens without the need for explicit pairwise comparisons. It's a shift from learning adaptive relationships (attention) to leveraging a fixed, mathematically defined structure (FFT) for mixing information globally. FNet applies the FFT along both the sequence and hidden dimensions, taking only the real part of the complex-valued output for simplicity and empirical effectiveness.
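A minimal sketch of the Fourier mixing sublayer as the paragraph describes it: a 1-D DFT over each token's hidden vector, a 1-D DFT over the sequence for each hidden feature, then the real part, i.e. y = Re(DFT_seq(DFT_hidden(x))). It uses a naive DFT for readability (any FFT gives the same values), and the names `dft_1d` and `fourier_mixing` are hypothetical. In a full FNet encoder block this output would still pass through the residual connection, layer normalization and feed-forward sublayer, which are omitted here. With NumPy, the same computation on an N × d array is `numpy.fft.fft2(x).real`.

```python
import cmath
from typing import List, Sequence


def dft_1d(x: Sequence[complex]) -> List[complex]:
    # Naive DFT: X[k] = sum_t x[t] * exp(-2*pi*i*k*t/N).
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n))
            for k in range(n)]


def fourier_mixing(x: List[List[float]]) -> List[List[float]]:
    """y = Re(DFT_seq(DFT_hidden(x))) for an N x d matrix of token embeddings."""
    n, d = len(x), len(x[0])
    # 1) DFT along the hidden dimension: transform each token's embedding row.
    rows = [dft_1d(row) for row in x]
    # 2) DFT along the sequence dimension: transform each hidden-feature column.
    cols = [dft_1d([rows[t][c] for t in range(n)]) for c in range(d)]
    # 3) Keep only the real part; nothing in this sublayer is learned.
    return [[cols[c][t].real for c in range(d)] for t in range(n)]


if __name__ == "__main__":
    tokens = [[0.1, 0.2, 0.3, 0.4],
              [0.5, -0.1, 0.0, 0.2],
              [0.3, 0.3, -0.2, 0.1]]  # N = 3 tokens, d = 4 hidden features
    mixed = fourier_mixing(tokens)
    perturbed = [row[:] for row in tokens]
    perturbed[0][0] += 1.0  # change a single entry of the first token only
    mixed2 = fourier_mixing(perturbed)
    changed = [any(abs(a - b) > 1e-12 for a, b in zip(r1, r2))
               for r1, r2 in zip(mixed, mixed2)]
    print(changed)  # [True, True, True]: every output token is affected
```

Perturbing one entry of the first token shifts every output entry (by exactly 1.0 here, since the 2-D DFT of a single spike at the origin is all ones). That is the global mixing the paragraph describes: no weights are learned and no pairwise scores are computed, yet every output position depends on every input position.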

Scalable Performance, Huge Speedups

The results published in the FNet paper were compelling:

Speed: FNet trains significantly faster than standard BERT-Base models – up to 80% faster on GPUs and 70% faster on TPUs for typical sequence lengths (512 tokens). O(N log N) scaling makes it increasingly advantageous for longer sequences.

Accuracy: Despite its simplicity and lack of parameters in the mixing layer, FNet achieves 92-97% of BERT's accuracy on the diverse tasks within the GLUE benchmark. While there's a slight accuracy trade-off compared to the best attention-based models, its performance demonstrates remarkable viability.

Hybrid Approach: An "FNet-Hybrid" model, strategically replacing only some attention layers with FFT layers (specifically, keeping attention only in the final two layers), recovered most of the performance gap, reaching 99% of BERT's accuracy. This highlights a potential synergy, using FFT for efficient global mixing and attention for finer-grained, adaptive refinement.

Long Sequences (LRA): When tested on the Long Range Arena (LRA) benchmark, designed specifically for evaluating models on long-context tasks, FNet matched the accuracy of top-performing "efficient Transformer" variants (like Reformer, Performer) while being significantly faster and more memory-efficient than all competitors on GPUs across the tested sequence lengths.
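The FNet-Hybrid layout described above can be expressed as a simple layer schedule. This is a hypothetical helper, not code from the paper; it assumes a 12-layer encoder (the BERT-Base depth) and models only the layer types, not the layers themselves. Because only the attention entries build an N × N score matrix, the quadratic cost is confined to the last two layers.

```python
from typing import List


def hybrid_layer_schedule(num_layers: int, num_attention: int) -> List[str]:
    """Hypothetical helper: Fourier mixing in every layer except the final
    `num_attention` layers, which keep self-attention (FNet-Hybrid style)."""
    if not 0 <= num_attention <= num_layers:
        raise ValueError("num_attention must be between 0 and num_layers")
    return ["fourier"] * (num_layers - num_attention) + ["attention"] * num_attention


if __name__ == "__main__":
    schedule = hybrid_layer_schedule(num_layers=12, num_attention=2)
    print(schedule)
    # Only the attention layers build an N x N score matrix per head.
    quadratic = schedule.count("attention")
    print(f"{quadratic} of {len(schedule)} mixing sublayers scale as O(N^2)")
```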

Experiments confirmed key design choices: mixing is crucial (removing it entirely fails), the FFT's structure provides a useful inductive bias (random mixing underperforms), and adding learnable parameters to the FFT itself didn't help, reinforcing the value of the fixed transform.
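One way to see why the FFT's structure matters: along the sequence axis, the DFT is just multiplication by a fixed N × N matrix W[k][t] = e^(−2πikt/N), so "parameter-free" means this matrix is never trained. The sketch below, with hypothetical helper names, contrasts it with a constant random matrix; the random variant is an illustrative stand-in for a random-mixing ablation, not the paper's exact configuration. Both mix every token into every output, but only the DFT matrix has the structure that lets an FFT compute the product in O(N log N) instead of O(N²).

```python
import cmath
import random
from typing import List, Sequence


def dft_matrix(n: int) -> List[List[complex]]:
    # Fixed, parameter-free N x N matrix: W[k][t] = exp(-2*pi*i*k*t/N).
    return [[cmath.exp(-2j * cmath.pi * k * t / n) for t in range(n)] for k in range(n)]


def random_mixing_matrix(n: int, seed: int = 0) -> List[List[float]]:
    # Illustrative stand-in for a random-mixing ablation: a constant (untrained)
    # Gaussian matrix scaled by 1/sqrt(N); not the paper's exact setup.
    rng = random.Random(seed)
    return [[rng.gauss(0.0, n ** -0.5) for _ in range(n)] for _ in range(n)]


def mix_sequence(w: Sequence[Sequence[complex]], x: List[List[float]]) -> List[List[float]]:
    """Mix N token vectors along the sequence axis: y[k] = Re(sum_t W[k][t] * x[t])."""
    n, d = len(x), len(x[0])
    return [[complex(sum(w[k][t] * x[t][c] for t in range(n))).real for c in range(d)]
            for k in range(n)]


if __name__ == "__main__":
    x = [[1.0, 2.0], [3.0, -1.0], [0.5, 0.5]]  # N = 3 tokens, d = 2 features
    # Row k = 0 of the DFT matrix is all ones, so output token 0 is the column sums.
    print(mix_sequence(dft_matrix(3), x)[0])
    print(mix_sequence(random_mixing_matrix(3), x)[0])
```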

Market Implications

FNet's efficiency isn't just an academic curiosity; it has tangible implications:

Short-Term: The dramatic reduction in computational cost immediately benefits latency-sensitive applications (real-time translation, content moderation) and enables more powerful AI at the Edge. Running complex NLP models directly on devices becomes more feasible, improving privacy and reducing reliance on cloud connectivity. Companies offering AI infrastructure (like Clika) can leverage such architectures to provide faster, cheaper inference solutions.

Long-Term: FNet and similar research signal a potential shift away from monolithic, attention-heavy models towards more diverse, efficient architectures. This could foster innovation in areas like low-power LLMs and truly ubiquitous on-device intelligence. It challenges the economic moat of large cloud AI providers whose value relies partly on managing attention's complexity at scale. Furthermore, it underscores that architectural innovation can sometimes bypass the need for purely brute-force compute scaling (i.e., ever-larger GPU clusters), potentially impacting hardware markets. This trend aligns with a move towards more distributed and decentralized AI systems.

Jevons' Paradox: The idea that increasing efficiency in resource use can sometimes lead to an overall increase in resource consumption because the lower cost spurs greater demand. In this context, making Transformer-like models much cheaper and faster could dramatically increase their adoption and the variety of applications they're used for, potentially leading to more overall AI computation, even if individual tasks are more efficient.

The Bigger Picture: Democratizing AI

FNet exemplifies how revisiting fundamental mathematical tools can lead to breakthroughs in efficiency. By demonstrating that a parameter-free FFT can effectively replace complex self-attention for many tasks, it challenges core assumptions in model design. This focus on efficiency is crucial for democratizing AI, making powerful models more accessible, affordable, and deployable in a wider range of environments, especially beyond large data centers. While attention remains state-of-the-art for peak performance, FNet proves that highly efficient alternatives are viable, paving the way for a future with smarter, leaner AI.